General:

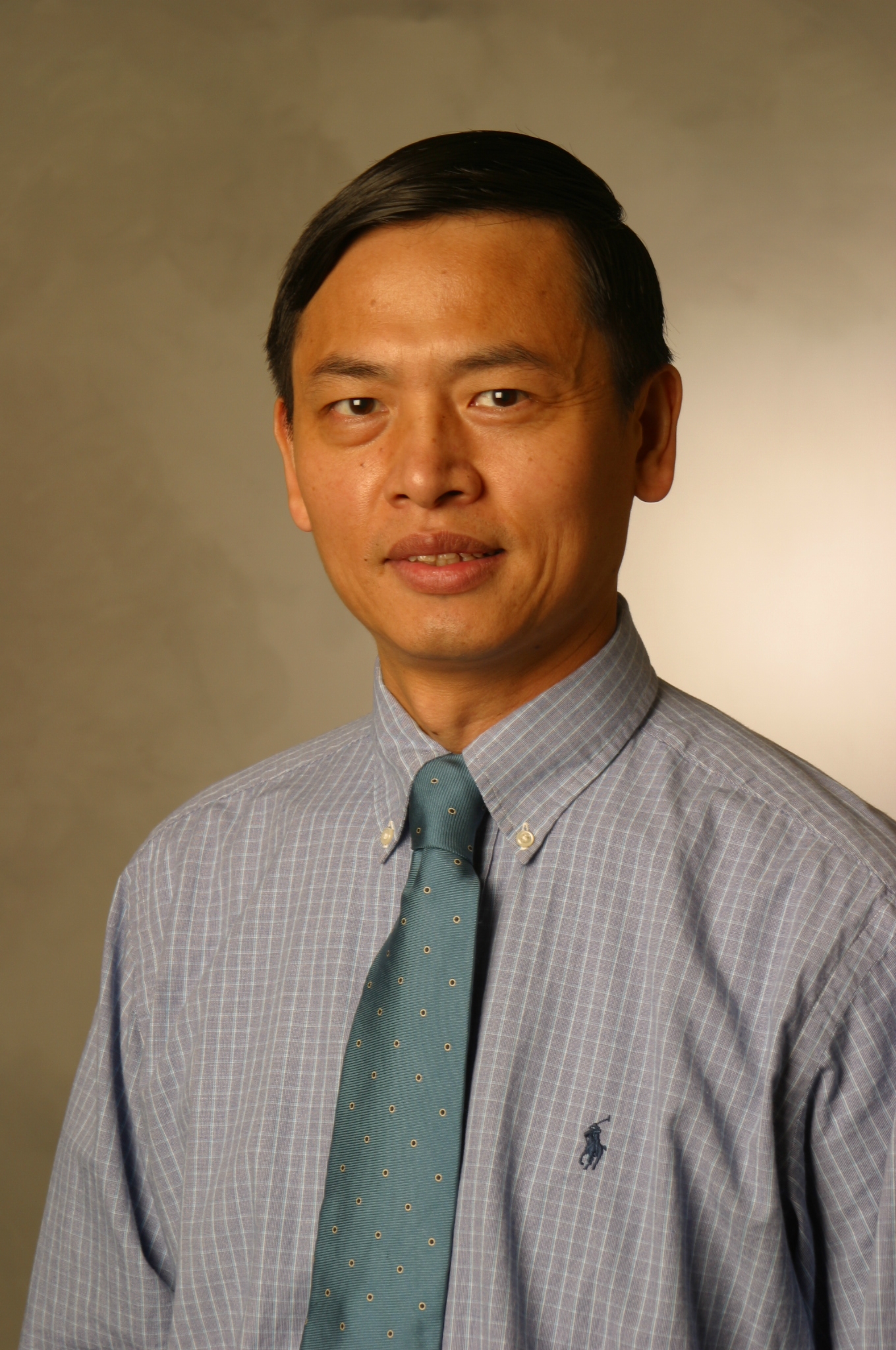

- Guofu is the Frederick Bierman and James E. Spears Professor of Finance at Olin Business School, Washington University in St. Louis, which he joined in 1990 and where he has worked ever since.

Guofu Zhou (周国富) grew up in Chengdu, the capital of Sichuan province, located in the southwestern part of China.

Chengdu has been a cultural center since 400 BC, as witnessed by the Jinsha Site Museum (built on the grounds of an ancient kingdom dating from 2700 BC!), which displays "real old" gold, jade, bronze, stone, and ivory artworks. Chengdu is one of the most beautiful cities in China, and here are some breathtaking photos from around Chengdu, where it is possible to drink fresh water directly from the Yangtze River, the longest river in Asia.

One can visit Blue Ox Palace, where Lao Tse, according to legend, flew to Heaven in 500 BC after writing Tao Te Ching: The Way, his most famous and fundamental text in both philosophy and Taoism; have a 10-person party on the toe of a gigantic Buddha statue overlooking the confluence of three rivers; climb a strenuous 40-mile stone path (or, nowadays, take a cable car in about 1 hour!) to Buddhism's Golden Summit on top of Mt. Emei (the highest of the Four Most Sacred Buddhist Mountains in China) to wonder and ponder while being fascinated by "Buddha's halo," which occurs when the sun's rays reflect off clouds, creating a circle of scintillating, multi-hued radiance; and search for the Way via meditation on Qing Chen mountain (the #1 Taoist mountain; 1.25 hours away from Chengdu).

Within the city, one can explore Chengdu Panda Base (the only place in the world where tourists can see many wild pandas up close); walk along the zigzag corridors of Du Fu Park (named after China's greatest realist poet, 712-770 AD, who wrote more than 200 poems there) among flourishing ancient trees, plum groves, and lotus ponds; stroll down Jinli Pedestrian Street, passing alleys of Chinese cultural dance, craft, cuisine, and tea gardens; see real artifacts and stone figures of the famous "Three Kingdoms" on display at Wuhou Memorial Temple; and then recharge immensely with spicy Sichuan foods, hot pots, and small dishes (a set of 20 different kinds is not uncommon for a single person!).

A Glimpse!

♙ ♙ ♖ Working Papers -- Publications (data & codes) -- Academic Contributions -- Courses -- Book -- Other Links ♖ ♙ ♙

- E-mail: zhou@wustl.edu

- Office: Room 207, Simon Hall (314-935-6384)

- Mail: Campus Box 1133, 1 Brookings Dr., St. Louis, MO 63130

- Degrees:
  - Ph.D., 1990, Duke University
  - M.A., 1987, Duke University
  - M.S., 1985, Academia Sinica
  - B.S., 1982, Chengdu University of Technology

- Curriculum Vitae: pdf

- Google Citations: 16,822

- SSRN Downloads: 192,741 (top 57 out of 1,779,498 researchers worldwide)

- Research Areas: Empirical asset pricing, applied AI and machine learning, asset allocation, portfolio optimization, anomalies, predictability, technical analysis, behavioral finance, household finance, mutual funds, hedge funds, options, Bayesian inference, and Chinese financial markets.

- Courses Taught: Data Analysis for Investments; Options and Futures; Derivatives; Real Option Valuation; Advanced Topics in Finance; Capital Markets & Financial Management; Mathematical Finance; Financial Economics I & II (PhDs); Research in Finance (PhDs).
